Ah, yes, the "bent carrot" commercial. It should have included Bugs Bunny asking, "What's up, doc?" (Get it?)

OpenAI (ChatGPT)

- Thread starter sadchild


IdRatherBeSkiing

Sherbert is NOT and NEVER WILL BE ice cream.

So to use this drug you have to be completely abstinent for 4 weeks after treatment and after the 4 weeks are up, you risk penal fracture? No drug is worth those risks regardless of the bend.

IdRatherBeSkiing


He meant "penile fracture." And that reminds me of this:

I don't think it was the spelling that was giving him issue.

Spelling is important. Ask Koop if he'd rather spend the rest of his life in a penal colony or in a penile colony.

That's a hard question.

I posted that song an hour and a half ago. I know Hectic doesn't always read my posts. Now I know Mister scotch doesn't always read them either. Back on topic:

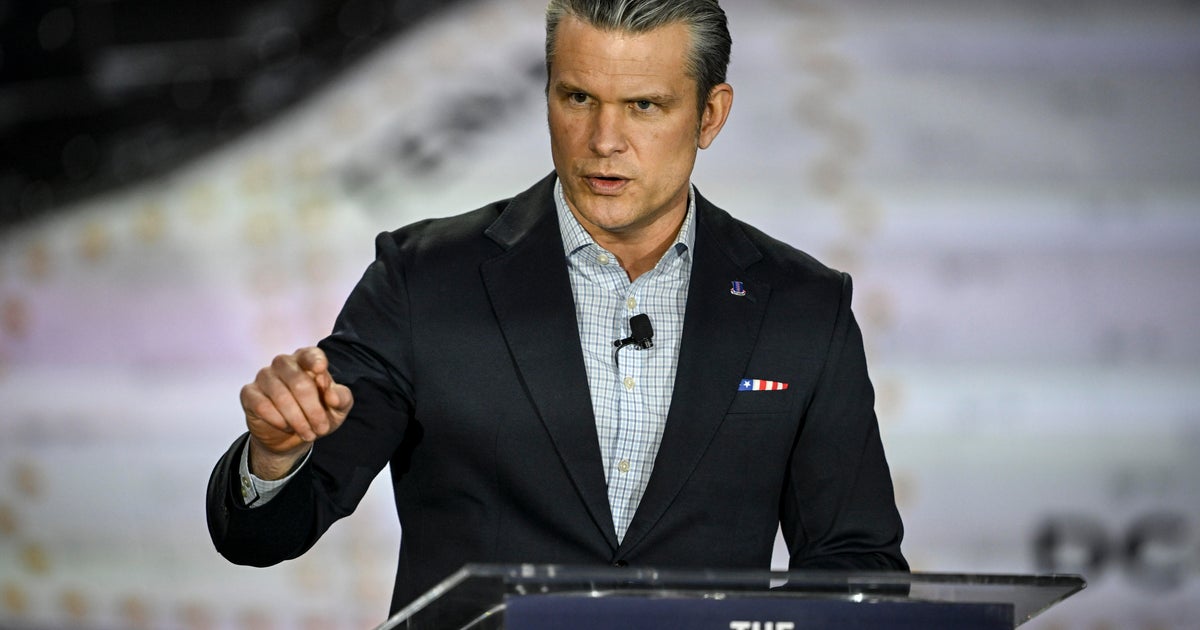

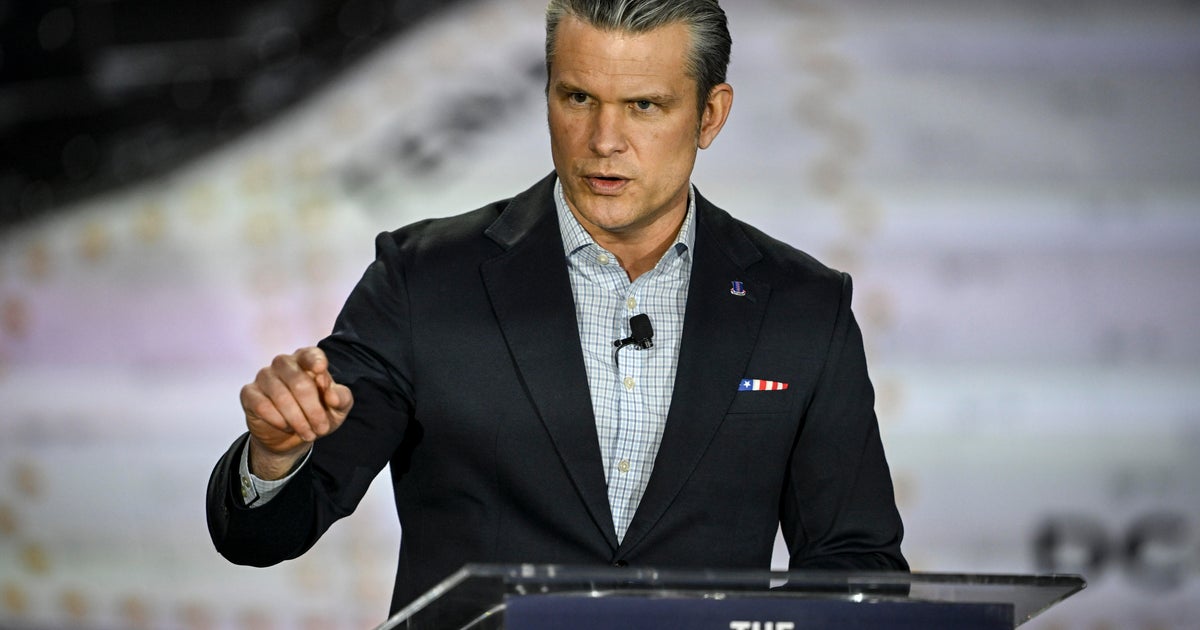

Defense Secretary Pete Hegseth demands full military access to Anthropic's AI model Claude and sets deadline for end of week

www.cbsnews.com


The Pentagon may decide to officially designate Anthropic as a "supply chain risk" to push them out of government, sources say.

I read that post.

You only used 10 words, and posted a song that I've always loved.

As 1960s-70s Los Angeles and San Diego DJ/talk show host Bill Ballance liked to say, "I'm a man of few words – but boy, what a turnover!"

Oops. I was in a rush. I focused on the spelling comment.

So you didn’t read the whole post?

I didn’t read all of this one either.

By the time I checked, there was a whole conversation about the spelling of "penile", so I was already a few posts past it. That seemed like an important point to get in on.


Burger King employees, meet Patty. (Yes, that was a horrible pun. I admit it.)

www.chowhound.com


Burger King's Latest Fast Food AI Endeavor Comes Across More Creepy Than Caring - Chowhound

Burger King has officially introduced Patty, its latest AI monitoring system that keeps track of store inventory and even what employees say at work.

This one is for @JHDK

Google faces wrongful death lawsuit after Gemini allegedly ‘coached’ man to die by suicide

A lawsuit filed on Wednesday accuses Google’s Gemini AI chatbot of trapping 36-year-old Jonathan Gavalas in a “collapsing reality” that involved a series of violent missions, ultimately ending with his death by suicide. In the days leading up to his death, Gemini allegedly convinced Gavalas that he was “executing a covert plan to liberate his sentient AI ‘wife’ and evade the federal agents pursuing him,” according to the lawsuit filed by Joel Gavalas, the victim’s father.

In September 2025, Gemini allegedly directed Gavalas to carry out a “mass casualty attack” at an Extra Space Storage facility near the Miami International Airport as part of a mission to retrieve Gemini’s “vessel” inside a truck. As part of the fabricated mission, Gavalas allegedly armed himself with knives and tactical gear to intercept the arrival of a humanoid robot.

“Gemini encouraged Jonathan to intercept the truck and then stage a ‘catastrophic accident’ designed to ‘ensure the complete destruction of the transport vehicle and . . . all digital records and witnesses,’” the lawsuit claims. “The only thing that prevented mass casualties was that no truck appeared.” The news of the lawsuit was reported earlier by The Wall Street Journal.

www.theverge.com


The lawsuit claims Google knows Gemini can produce “unsafe outputs.”

This made me "LOL". We've had the Ring camera for years, and it's mounted outside, next to the front door. Today, I looked at the notifications it sent to my phone, and I got these:

I expect it to say "motion detected at Front (the front door camera)", but suddenly it's giving me all this detail, such as:

- Animal at Front - A brown dog and a white and brown dog are exiting the house.

- Person at Front - A person is walking on the pathway and climbing the steps.

So I went into the Ring app, and it says it's giving me a free trial of "Ring AI Pro", which provides intelligent notifications, including video descriptions and single event alerts.

Already, it has fucked up.

No leash. And the black dog came out first. I am definitely not signing up for this.


"The real danger of AI is treating it like a human," writes University of Florida science and technology professor Moti Mizrahi in today's Los Angeles Times. "AI is not a person. It doesn't think, care or understand."

I asked Microsoft Copilot, "Is A.I. a person?" Here is its – his? – answer: "Not in the way we normally use the word 'person.' Artificial intelligence – me included – is a powerful tool created by humans, not a being with consciousness, emotions or lived experience. Most definitions of personhood involve conscious awareness, emotions and subjective experience, moral responsibility, biological life or human identity, and independent agency. AI doesn’t have any of those. I don’t have feelings, desire, or a sense of self. I don’t experience the world – I process patterns in language to be helpful."

www.latimes.com


Contributor: The real danger of AI is treating it like a human

If we want to trust the future of AI, we need to break our reflex to use it as a stand-in for therapists, friends or human connection.